How AI Music Can Power Personalized Audio Experiences in 2026

Music used to come to all of us the same way. Radio stations pumped out the same songs to millions of ears at once, albums rolled off the press identical for every buyer, and even early streaming was basically a giant shared shelf we all picked from. "Personalization" mostly meant building your own playlist or getting a few suggestions based on what people with similar taste were listening to.

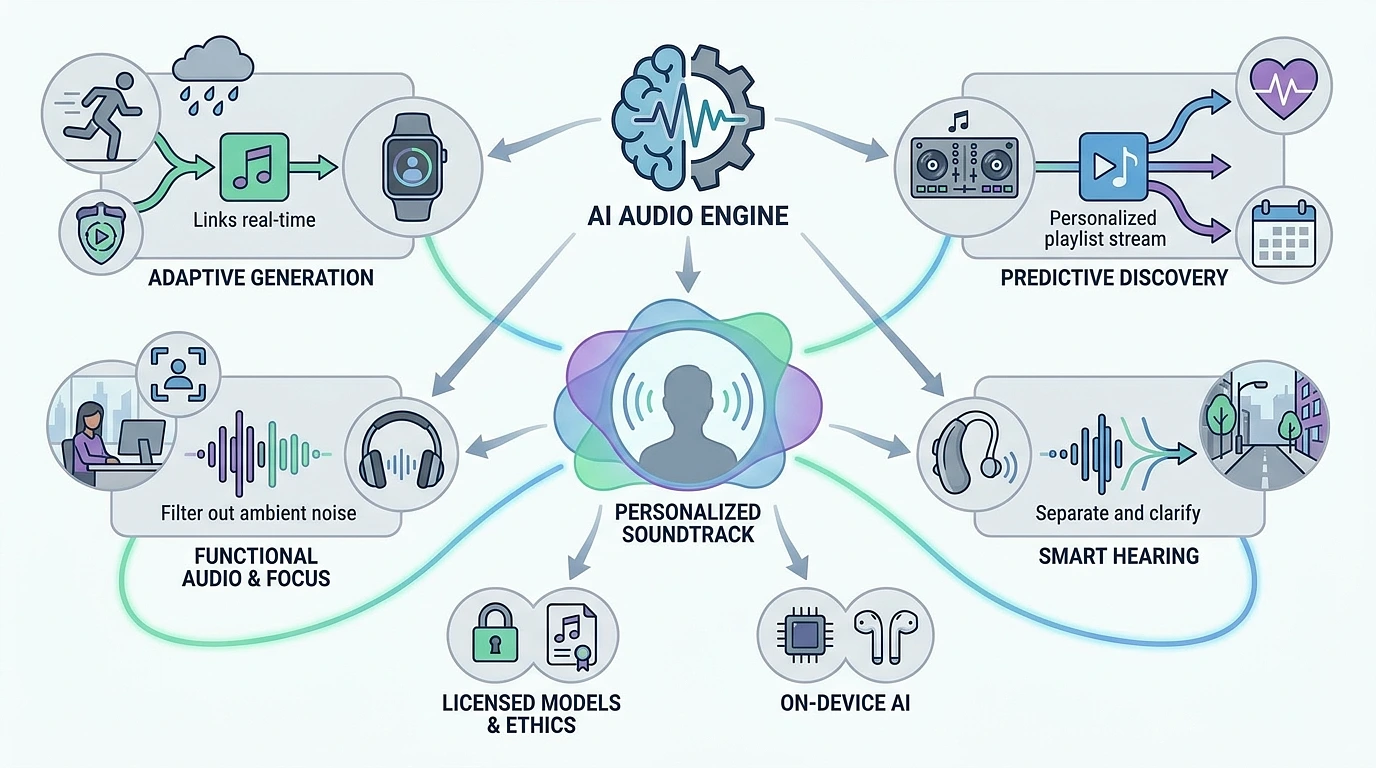

That era is gone. By 2026, AI has rewritten how audio gets made, delivered, and experienced. AI music isn't a party trick anymore; it's the engine quietly running underneath a much more personal kind of listening, showing up in streaming apps, fitness gear, hearing aids, offices, and even radio.

And the change runs deep. Instead of pulling from a fixed catalog of songs recorded years ago, listeners in 2026 are getting audio that's built for them in the moment, shaped by their mood, their body, where they happen to be, and what they've been into lately. Here's how it's actually playing out, what's leading the charge, and where the industry still has work to do.

What AI-Powered Personalized Audio Actually Does

- Generate brand-new music on the spot.

- Adjust existing songs based on what's happening around you.

- Build continuous listening sessions that evolve with you.

- Clean up real-world audio (conversations, street noise, ambient chaos) so you can focus or hear better.

The result feels less like a broadcast and more like a soundtrack that's actually paying attention.

1. Music That's Made, Not Just Picked

The biggest leap in 2026 is that AI has gone from choosing music to creating it.

Soundtracks That Read the Room

Today's systems spin up music that responds to whatever you're doing in real time. Your fitness watch picks up that you're starting to flag mid-run? The tempo lifts and the energy gets sharper. Rainy Sunday morning and your smart speaker knows it? Something soft and ambient takes over without you lifting a finger. Time of day, weather, location, activity: it all feeds into a soundtrack that keeps reshaping itself.
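
To make that concrete, here's a rough sketch of how context signals might turn into music settings. Everything in it is an assumption for illustration: the `Context` fields, the `choose_soundtrack` function, and the tempo numbers are made up, not how any particular watch or speaker works.

```python
from dataclasses import dataclass


@dataclass
class Context:
    """Hypothetical snapshot of what the devices know right now."""
    activity: str      # e.g. "running" or "resting"
    pace_ratio: float  # current pace / target pace; below 1.0 means flagging
    raining: bool
    hour: int          # 0-23, local time


def choose_soundtrack(ctx: Context) -> dict:
    """Map a context to illustrative generation settings."""
    if ctx.activity == "running":
        tempo = 160  # assumed baseline running tempo in BPM
        if ctx.pace_ratio < 1.0:
            # Lift the tempo as the runner falls behind, capped at +15 BPM.
            tempo += min(15, round((1.0 - ctx.pace_ratio) * 100))
        return {"tempo_bpm": tempo, "style": "energetic"}
    if ctx.raining and ctx.hour < 12:
        # Rainy morning: something soft and ambient.
        return {"tempo_bpm": 70, "style": "ambient"}
    return {"tempo_bpm": 100, "style": "neutral"}


flagging_run = choose_soundtrack(Context("running", 0.9, False, 18))
rainy_morning = choose_soundtrack(Context("resting", 1.0, True, 9))
```

A real system would presumably learn these mappings rather than hard-code them, but the shape is the same: signals in, music settings out.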

Just Tell It What You Want

Endless playlist scrolling is starting to feel dated. You can just say what you're after:

"Make me a 30-minute chill R&B mix with female vocals, soft bass, and that nostalgic 2008 vibe."

The AI takes the prompt and pulls something together right away, sometimes from licensed tracks, sometimes from generative output, often a blend of both.

Real-Time Generation

Tools like Mubert and Soundraw let you actually steer compositions through prompts, mood sliders, or activity tags. What you get is a piece of audio that didn't exist a minute ago and might never exist again. Genuinely one-of-a-kind sound.

2. Discovery That Actually Gets You

Recommendation engines have come a long way from sorting by genre and tempo. In 2026, they're picking up on the feel of a song (the emotion, the texture, the structure) and figuring out what's going to land for you on a deeper level.

Knowing What You Want Before You Do

By tracking what you skip, what you replay, how your body reacts, and even what time of day you tend to play certain things, AI can usually call your next move before you make it. For some listeners, hitting "play" is barely a thing anymore; the right song just shows up.

AI DJs That Actually Talk to You

Features like Spotify DJ use generative AI to deliver something closer to a friend introducing tracks than a playlist on shuffle. A synthetic DJ tells you what's coming, explains the pick, and adjusts as it goes. You get the warmth of old-school radio with the precision of personalization.

Mood Mapping

Some of the sharpest work happening right now is around emotional transitions. AI can build musical arcs that move you from one headspace to another, easing you down from a hard workout into something calming, or slowly lifting you out of a slump through a carefully sequenced shift in tone.
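
One simple way to picture a mood arc is as a line drawn between two energy levels, with tracks picked to follow it. The sketch below assumes each track already has an energy score between 0 and 1; the `mood_arc` function, the track names, and the scores are all hypothetical, and real systems likely weigh far more than a single number.

```python
def mood_arc(tracks: dict, start: float, end: float, steps: int) -> list:
    """Pick up to `steps` tracks whose energy moves from `start` to `end`.

    `tracks` maps a track name to an assumed energy score in [0, 1].
    """
    remaining = dict(tracks)           # copy so the caller's dict is untouched
    steps = min(steps, len(remaining))
    playlist = []
    for i in range(steps):
        # Linear interpolation between the starting and ending energy.
        target = start + (end - start) * i / max(steps - 1, 1)
        # Choose the unused track whose energy is closest to the target.
        name = min(remaining, key=lambda n: abs(remaining[n] - target))
        playlist.append(name)
        del remaining[name]
    return playlist


library = {"sprint": 0.9, "jog": 0.7, "stroll": 0.5, "drift": 0.3, "sleep": 0.1}
cooldown = mood_arc(library, start=0.9, end=0.1, steps=3)
```

Swapping `start` and `end` gives the opposite journey: a slow lift out of a slump instead of a cooldown.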

3. Audio That Works for Your Life

This isn't all entertainment. AI music is changing how sound actually functions in everyday life.

Focus Music That Pays Attention

Study and work soundtracks now respond to whether you're actually locked in. If your attention starts drifting, the AI might subtly tighten the rhythm or strip the melody back to pull you in again. Apps like Endel and Brain.fm have grown into something close to dynamic focus environments.

Hearing Aids That Got Smart

Hearing aids in 2026 have basically become intelligent listening companions. They run AI right on the device to separate voices from background noise in real time, automatically adjusting whether you're at a loud restaurant, walking down a busy street, or sitting in a quiet office. For millions of people, this isn't a fun feature; it's how they navigate the world.

Radio, Reinvented

Radio itself is getting a makeover. AI is powering personalized, hyper-local, "augmented" stations where synthetic voices read news, weather, and traffic that's actually relevant to you, with songs picked just for your ears. The old shared experience of everyone hearing the same broadcast is giving way to millions of individual streams that somehow still feel like radio.

4. Where the Tech Stands and What's Still Hard

After a few rough early years full of lawsuits, mediocre output, and copyright headaches, the industry has been forced to grow up fast. By 2026, most of the focus is on building this stuff responsibly.

Properly Licensed Models

Major partnerships between AI developers and the big labels, publishers, and rights holders have produced models trained on cleared catalogs, built to work with artists rather than rip them off. Paying for training data is starting to feel like the default, not the exception. And while generative tools handle the variations and the everyday filler, brands building apps, games, films, and signature audio identities still hire original music composers for the work that needs a real point of view, often pairing them with AI tools to speed up drafts and revisions.

Tagging the AI Stuff

Streaming services are now flagging AI-generated tracks. Some platforms quietly limit how much synthetic content shows up in discovery feeds to make sure human artists still get oxygen. Others let you decide for yourself whether you want AI music in the mix or filtered out entirely.
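
Here's a small sketch of what that kind of cap could look like. It assumes every track carries an `is_ai` flag and that the feed is already ranked; the `build_feed` function and its `max_ai_share` and `allow_ai` settings are invented for illustration and don't describe any real platform's policy.

```python
def build_feed(candidates, max_ai_share=0.2, allow_ai=True):
    """Walk a ranked list of (title, is_ai) pairs and cap the AI share.

    An AI-tagged track is skipped if adding it would push the share of
    AI tracks in the feed so far above `max_ai_share`.
    """
    feed = []
    ai_count = 0
    for title, is_ai in candidates:
        if is_ai:
            if not allow_ai:
                continue  # listener opted out of AI music entirely
            if ai_count + 1 > max_ai_share * (len(feed) + 1):
                continue  # would exceed the cap at this point in the feed
            ai_count += 1
        feed.append(title)
    return feed


ranked = [("a", False), ("b", False), ("c", False), ("d", True), ("e", True)]
capped = build_feed(ranked, max_ai_share=0.25)
human_only = build_feed(ranked, allow_ai=False)
```

The same function covers both approaches in the paragraph above: the platform sets the cap, and the listener can switch AI tracks off altogether.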

AI on Your Device

The shift that probably matters most under the hood is Hybrid Voice AI: small language models running right on your phone, earbuds, or hearing aid. Faster reactions, better privacy, less leaning on the cloud. It's what makes truly personalized audio realistic at scale.

The Stuff Nobody's Solved Yet

Even with all the wins, real questions are still hanging in the air:

- How do you fairly pay artists whose work trained the model?

- Does generative music chip away at how much we value human creativity?

- If a song is made just for you, in that one moment, who actually owns it?

These are the conversations shaping whatever comes next.

What's Coming After 2026

Look past where we are now and the direction is pretty clear. AI music is going to keep weaving itself deeper into daily life. A few things worth watching:

- Biometric composition that responds to heart rate variability, stress signals, even brain activity.

- Cross-modal personalization where your music shifts in sync with what you're watching, reading, or playing.

- Human-AI collaboration where musicians treat AI as a creative partner instead of a threat.

- Always-on personal soundtracks: an AI-made "score for your life" that follows you across devices and moments.

The point isn't that AI is going to replace human-made music. It's that AI is creating a whole new layer of sound (adaptive, ambient, deeply personal) that exists alongside the songs and artists we already love.